The perils of image manipulation in science

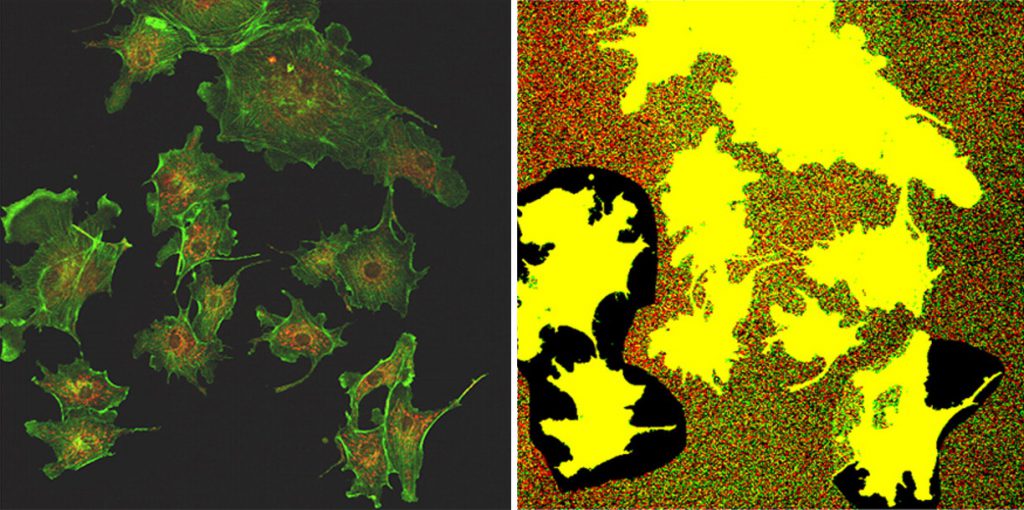

Volume 119, No 38, Figure 1. To most people, this is just a set of incomprehensible scientific data. But this figure, along with those from 13 other papers, is special. They lie at the heart of a high-profile scientific scandal: the downfall of a Nobel Laureate.

Unlike many other scientific fields, biology, and especially sub-cellular biology, is uniquely dependent on captured images as a primary form of data. A lot of techniques used daily in labs rely on cameras to take snapshots, whether that be of cells under the microscope, or of ‘blots’ to quantify protein levels in cells. This dependence exposes the field to a pressing problem – image manipulation.

Studies claim that over 6% of biomedical studies contain plagiarised, falsified or manipulated images. Included in this statistic are the many papers by American paediatrician and scientist Gregg Semenza, who shared the 2019 Nobel Prize in Physiology or Medicine.

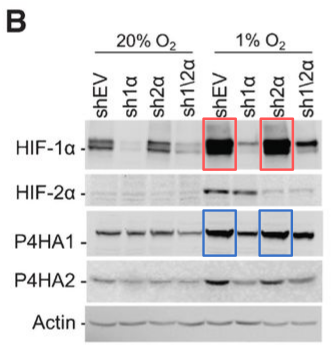

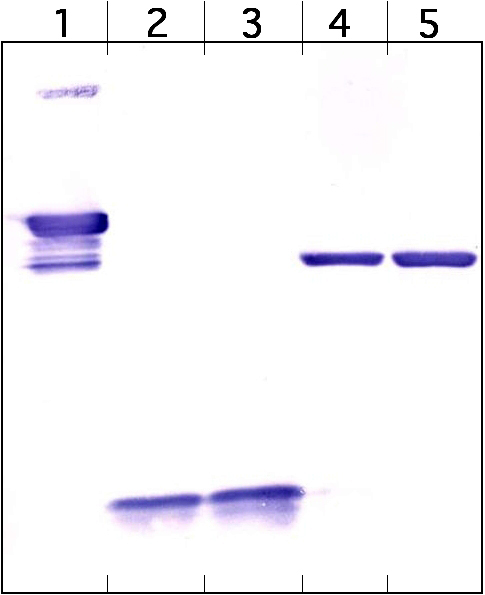

Semenza’s research focused on understanding how cells sense and react to oxygen availability. Proteins are the workhorses of the cell, with different types of proteins (of which there are thousands) performing different tasks. To understand a cellular process, such as oxygen response, one needs to identify the proteins responsible. Blots are a common technique used in this endeavour. The contents of cells are passed through a membrane which separates the proteins into various “bands”. The intensity of a band in the picture of a blot indicates the level of protein. This is a simple approach in theory, but one often encounters many challenges while running and analysing a blot – for instance, a band which should be bright may be absent, or may look deformed. The complexity arises from the large number of steps leading to the final image.

By the time he received his Nobel in 2019, Semenza had been leading his research group for over two decades. In this time, paper after paper was released from his group – PubMed, an online database of scientific literature, reports over 300 publications between 1999 and 2019 alone. In the midst of this publishing spree came his first retraction in 2011. This could easily be dismissed as a genuine error. Three years after the Nobel was awarded, however, came the run of retractions that he is now (in)famous for. Between 2022 and today, 12 more of Semenza’s papers have been withdrawn due to image manipulation.

These retractions followed suspicions about Semenza’s research that were made public on PubPeer, an online forum created to discuss published literature. Several users of the website, some anonymous, reported irregularities in published images across a number of publications. The 13 retractions were issued primarily because individual bands had been modified, cut out, or copied between different blot images. Such changes mean that the resulting images are no longer a truthful reflection of the reality in cells. In essence, this is tantamount to fraud. It is still unclear who was directly responsible for these shenanigans, since other co-authors were commonly listed on the retractions. As the primary corresponding author, however, Semenza bears primary responsibility.

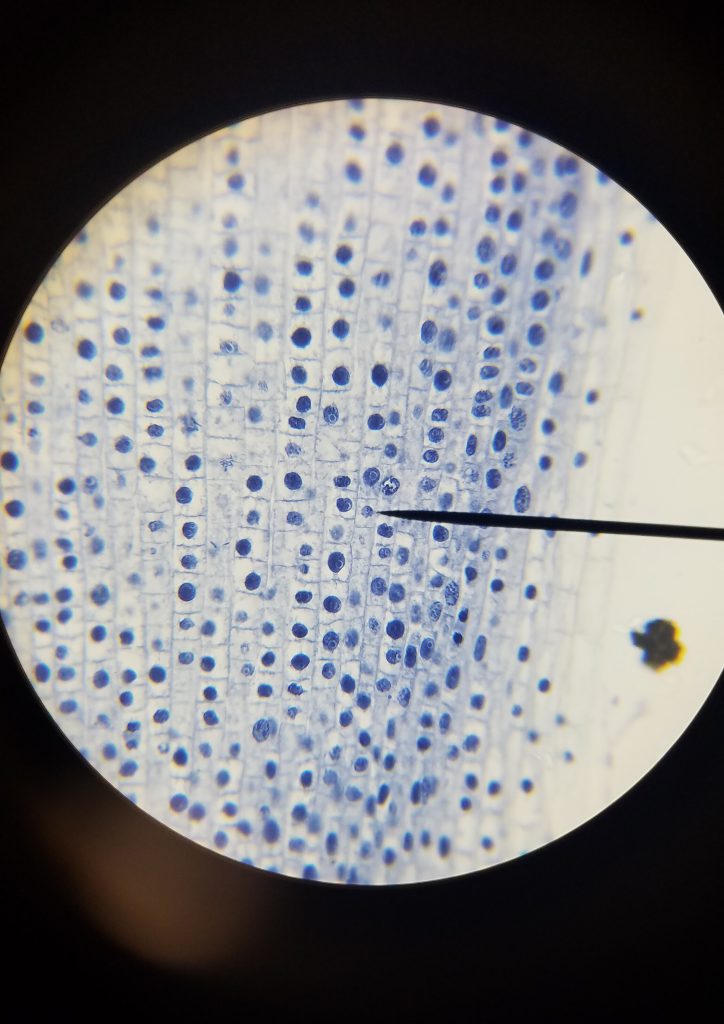

Improper manipulation extends beyond just blots. Microscopic images of cells, tissue sections, and other lines of evidence also fall prey. An example of this is a (formerly) pivotal study in the field of stem cell research, published in Nature in 2002. The paper showed that cells derived from an adult human’s bone marrow can transform into any cell type, with huge implications for treating many diseases.

The only problem? Two microscopy images of cells in the study, which were supposed to be from entirely different mice, clearly overlapped – one region of the first image was identical to a region of the second image, even though they were purportedly from separate animals. Another image of a tissue section had two regions that looked duplicated. The paper was retracted in 2024, 22 years after its publication, after these concerns were pointed out on PubPeer. This makes it the most cited retracted paper of all time, with 4,491 citations before the retraction. Hundreds of research groups referred to this paper in their own research, without knowing about the problems that lay within.

Untrustworthy data poses a bigger risk than just casting doubt on a single set of conclusions. Any study in the making necessarily draws on previous research, and when this previous research is faulty, an ocean of subsequent research suddenly looks murky. Image manipulation is a huge problem in biology particularly, but shoddy science is not limited to this one field. A 2009 study reported that close to 2% of surveyed scientists admitted to falsifying data, and many consider that to be a low estimate.

The sheer volume of such data has given birth to entire organisations dedicated to uncovering falsification in research. Sites such as Retraction Watch are chock-full of reports of suspicions and retractions. Amidst all this commotion, however, the simplest question remains the hardest to answer: Why are images falsified in the first place?

Not all that lustres red is blood …

Getting things to work in biological research is complex. Talk to any person who works in a lab, and you’re guaranteed to hear about an experiment not working for some reason or other. Weeks are spent optimising protocols and making changes. After an experiment does work, it must be repeated a few times, to ensure that what one sees is true and not just a fluke. Researchers thus end up generating a huge amount of data, sometimes up to terabytes of images, of which only a small fraction is usable.

Keeping track of which image came from which experiment is thus a momentous task in and of itself. When it’s time to frame the results and write a manuscript, mistakes are understandably made. After images are generated, they need to be processed and edited to make them easier to interpret and ready for publication. This step is where it is easiest to mess up.

“A common mistake is that students contrast images corresponding to a control group and a study group differently. This just comes naturally to us – we all have mobile phones, we take pictures, and we enhance pictures to make them look pretty. Scientifically, however, doing this to an image changes its properties, which one will [improperly] quantify later,” says Shova Maharana, Assistant Professor at the Department of Microbiology and Cell Biology, IISc.

Images are often used to infer changes in a biological system. For instance, if a drug is meant to reduce the levels of a specific protein in the cell, one can simply image cells treated with the drug and compare the intensity of the protein against untreated cells to check its function. However, since the treated and untreated cells need to be compared directly with each other, care must be taken to ensure they are processed the same way. Otherwise, the intensity from one set may falsely be increased.
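The effect of uneven processing can be shown with a toy example. The sketch below is only an illustration: the pixel values, the `stretch_contrast` helper, and the chosen limits are assumptions made up for this purpose, not data or code from any study mentioned here.

```python
from statistics import mean

def stretch_contrast(pixels, low, high):
    """Linearly rescale values so `low` maps to 0 and `high` to 255, clipping outside."""
    scale = 255.0 / (high - low)
    return [min(255.0, max(0.0, (p - low) * scale)) for p in pixels]

# Hypothetical 8-bit intensities of the same protein in two samples.
control = [100, 110, 120, 130, 140]   # untreated cells
treated = [50, 55, 60, 65, 70]        # drug-treated cells, half as bright

raw_ratio = mean(treated) / mean(control)

# Consistent processing: one set of limits, chosen once, applied to both images.
shared_low, shared_high = 0, 200
same_ratio = (mean(stretch_contrast(treated, shared_low, shared_high))
              / mean(stretch_contrast(control, shared_low, shared_high)))

# Inconsistent processing: each image "enhanced" to its own minimum and maximum.
auto_control = stretch_contrast(control, min(control), max(control))
auto_treated = stretch_contrast(treated, min(treated), max(treated))
auto_ratio = mean(auto_treated) / mean(auto_control)

print(f"raw ratio:        {raw_ratio:.2f}")
print(f"shared limits:    {same_ratio:.2f}")
print(f"per-image limits: {auto_ratio:.2f}")
```

In this made-up case the drug halves the signal. Shared limits preserve that ratio, whereas enhancing each image separately makes the two samples look equally bright, and the effect of the drug disappears.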

What can be attributed to blunder must not be attributed to malice. Papers can be published with genuine errors, which may be flagged for suspicion down the line. These can be corrected, since almost all journals offer authors an option to submit corrections. But to say that all faulty data in the literature is simply a result of errors would be ignorant.

… but blood has a distinct taste

Contrast is a powerful tool for editing images. It can be used to simply make an image look clearer. But contrast can be used to play detective too. By playing with the colour levels of an image, one can analyse if an image has been altered substantially. A cell pasted in from another image, for instance, may not have the same background properties as the rest of the image – so when contrast is increased, it looks obviously different. And this kind of manipulation cannot simply be attributed to error.
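A rough sketch of this idea, using a tiny made-up image, is given below. The pixel values, the `stretch_levels` helper, and the 8 to 16 window are all hypothetical choices for illustration; real images are noisy, and the checks used by image sleuths are considerably more involved.

```python
def stretch_levels(image, low, high):
    """Map values in [low, high] onto 0..255, clipping everything outside."""
    scale = 255.0 / (high - low)
    return [[round(min(255.0, max(0.0, (p - low) * scale))) for p in row]
            for row in image]

# Hypothetical 6x6 grayscale image: dark background near 10, one bright cell.
image = [[10, 11, 10, 9, 10, 11] for _ in range(6)]
image[1][1] = image[1][2] = 200          # a genuine bright cell

# A 3x3 patch pasted from another image whose background sat slightly higher (14).
for r in range(3, 6):
    for c in range(3, 6):
        image[r][c] = 14
image[4][4] = 200                        # the pasted cell

# At normal display levels a 4-unit background shift is practically invisible.
# Stretching only the darkest range (here 8..16) exaggerates it.
revealed = stretch_levels(image, low=8, high=16)
for row in revealed:
    print(" ".join(f"{v:3d}" for v in row))
```

After the stretch, the original background spreads over dim values while the pasted patch becomes a much brighter square with sharp edges, which is the kind of outline that raises suspicion.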

It is easy to frame a researcher who indulges in this kind of fabrication as just another bad apple. The scale of the problem, however, suggests a more systemic issue.

“We currently live in a ‘publish or perish’ world,” says Meetali Singh, Assistant Professor at the Department of Developmental Biology and Genetics, IISc. “A huge pressure exists to publish a certain number of papers every year. Instead of focusing on numbers, we need to focus on quality.”

Universities often have publication requirements for lab heads to continue in the institute or be promoted, which can be extremely stressful, especially in labs which experiment with novel and volatile ideas. This pressure is transmitted to students and scientists in the lab, who must also publish more to earn scholarships and positions. Even though it may be argued that pressure is necessary to ensure good science, this severe focus on metrics is unsustainable, and leads to stress, conflict, and potentially fraud.

Academic stress is often not the only source of tension. “A lot of it boils down to lab culture. Manipulation happens when the environment in the lab is not supportive, or if one is under pressure [from the lab head] to get a certain specific result,” says Shruthee*, PhD student in the Division of Biological Sciences, IISc.

While pressure is shared amongst those in a lab, so is responsibility. Technically, all co-authors of a paper share the duty of ensuring the veracity of the data, including foremost the corresponding author – usually, the head of the lab. It is extremely difficult, however, for every person involved to check all the data, due to the number of different experiments a modern biological paper usually includes.

Modern papers are also often co-authored by a large number of researchers (depending on the subfield and journal, seeing publications with 20-25 contributors is not unusual). Collaboration between specialists in different fields is the bread and butter of science today. But when misconduct takes place, the source of it becomes difficult to pinpoint.

Cleaning up the scene

Faults at multiple points – experimentation, supervision, publishing, and administration – result in unsound data and untrustworthy results. Changes, both incremental and systemic, are necessary to ensure integrity in the scientific process. This, no doubt, must start in the lab. Rigorous practices, tolerance for genuine mistakes, and harsh consequences for malpractice are essential. Early career researchers must also be trained in the ethics of research.

“As lab heads, we should not hurry students up in generating data. We must invest time in ensuring that lab members learn techniques properly, and proper mentoring is really important,” says Shova. A healthy culture at work is probably the most effective deterrent to scientific malpractice. In addition, a proactive effort must be made to ensure that the data published by a lab is authentic. “Before publishing, the raw data and analysis pipelines must be checked. One must be able to vouch for the data published,” she says.

While incremental improvements are imperative, they can only hold ground when accompanied by systemic reform. At the forefront of this are organisations such as India Research Watch (IRW).

“Researchers are judged based on the number of publications and citations. Chasing such metrics results in [falsification],” says Achal Agrawal, founder of IRW. “We have to stop being lazy. We have to move away from the convenience of simple metrics if we want to incentivise quality work.” Pressure from IRW and other groups led to the NIRF rankings, published by the Government of India, penalising universities with many retractions.

It is clear that this is not enough. As more money continues to pour into technology and artificial intelligence, generating falsified images is easier than ever. Bands in a Western blot, images of bacterial plates, and even entire microscopic images can be generated just by submitting a prompt.

Even then, a sense of cautious optimism prevails. “If we can use AI to make images, we can use AI to detect fraudulent images. And I’m sure that technology will come soon,” says Shova.

The future tests the past

The trustworthiness of science depends on the belief that even if faulty conclusions are drawn, they will be tested by future research in the field. For particularly exciting results, scientists often jump on the case immediately, trying to replicate the results in their own lab. If it works, confidence is built in the result. If not, eyebrows are raised.

A well-known case of this kind of sleuthing paying off happened in stem cell research. Stem cells are cells that can become any type of cell in the body, a capability that does not exist in most cells of an adult. Obtaining stem cells from a grown human is the holy grail of regenerative medicine, since they can technically be used to treat defects in any organ, without dealing with the complications of transplants and other procedures. The field of stem cell therapy often makes headlines. A lot of the time, however, this is for the wrong reasons. Fraud is exceedingly common in this line of research.

In 2014, two papers were published in Nature, both from a lab in the Riken Center for Developmental Biology (CDB) in Kobe, Japan. The papers essentially claimed that an acid bath is enough to convert normal cells into stem cells. This was monumental, and biologists from across the world rushed to replicate this. Worryingly, no one was able to. Along with allegations of plagiarism and image manipulation, this prompted both papers to be withdrawn within just a few months, sending the institute and field into disarray.

It is often argued that this incident is proof of science working as intended, with fraud being revealed as a result of more research. This obfuscates the fact that only the most exciting results ever get looked at again. Most scientific results do not get validated for reproducibility, and this has led to what is called a “replication crisis”.

Solving this crisis only requires simple solutions. For instance, more roles aimed specifically at ensuring the authenticity of previous experiments, through data validation and reproducing them, must be established. However, bringing these changes to fruition necessitates political will and funding.

“We do not have a dearth of talent. There are currently more qualified individuals than there are positions available in academia. If the funding becomes available, I’m sure people would be interested in taking such positions,” explains Meetali.

Scientists who have their data retracted do not have it easy. Any future research conducted by the lab will be surrounded by a cloud of suspicion, even if it is scientifically sound. Retractions have a ripple effect – other related articles are cited less when a retraction occurs, and the entire field of study may receive less funding.

In an era of increasing scepticism, decreased funding, and more opportunity to do the wrong thing, one can only hope that these repercussions are not outweighed by a growing pressure to perform. As the old adage goes, science is self-correcting. Only time can tell if this saying is dead in the water.

*The name of the student has been changed to protect their privacy.

Kedhar R Thyagarajan is a fourth-year Bachelor of Science (Research) student at IISc and a science writing intern at the Office of Communications.

(Edited by Abinaya Kalyanasundaram)